The most popular and engaging online games, ones that will leave no one indifferent. The ranking covers a range of genres, so everyone can find something to their taste. What these games have in common is online play: the chance to team up with friends and have a good time together.

PlayerUnknown's Battlegrounds

A masterpiece that became the breakthrough of the year on release and was named the best multiplayer game: PLAYERUNKNOWN'S BATTLEGROUNDS. It is the most popular paid battle royale game, a shooter in which the last surviving player wins. A group of up to 100 players lands on an 8×8 km map filled with a wide variety of weapons. You can play solo or bring teammates along.

Success depends not only on gear and shooting skill but also on choosing the right tactics. After landing, the real "meat grinder" starts in certain zones where the chance of finding better gear is higher, but surviving there is hard. Adapt to the terrain, whether jungle or desert: storm buildings or lie in wait for opponents in the bushes with an overpowered frying pan in hand!

Beyond the standard settings, you can choose server options that remove certain advantages (for example, the third-person camera lets you survey the surroundings without leaving cover). The game is available on PC via Steam, on Xbox and PlayStation, and on Android and iOS mobile devices.

The game is very demanding on hardware, and not everyone could afford to buy it, so the developers met players halfway and released a lighter version, PUBG Lite, which runs without problems on low-end machines (graphical detail is significantly reduced).

Fortnite

Fortnite was originally conceived by Epic Games as a survival game set in an open world overrun by zombies. At first, players explore, gather resources, and build fortifications so they can later hold off hordes of zombies. Cooperating with other survivors is important for collecting items that help upgrade your fort so it can repel more waves of enemies.

Extensive building tools let you edit every wall of your base, place stairs and windows, and add a roof to suit the group's needs. The map is randomly generated each time, which adds to Fortnite's replayability.

The game owes most of its popularity to its battle royale mode, Fortnite: Battle Royale, which, unlike PUBG, is distributed for free under the free-to-play model. As in other games of the genre, players take part in a massive battle on a large map (solo or in a squad of 2 to 4 players). The main goal is to survive, killing or avoiding the other players along the way. The "safe" zone gradually shrinks, forcing players into face-to-face encounters. Over the course of a match you can find a wide variety of weapons that give you an edge over other players. The building mechanic carried over from the original game, and it became the feature that sets Fortnite apart from other battle royale games.

With some limitations, the game is available not only on PC but also on consoles and iOS mobile devices. Even if the popularity of battle royale fades, the project will still be successful thanks to its original mode.

DotA 2

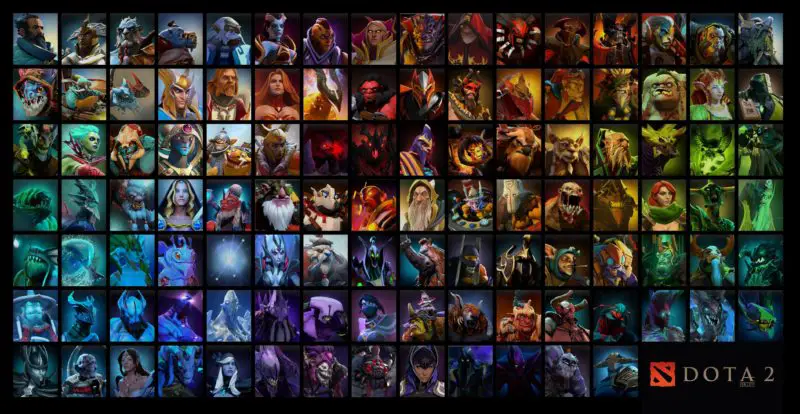

DotA 2 is a multiplayer online game in the MOBA genre from Valve. It grew from a custom map for Warcraft III into a standalone project and became one of the most popular esports disciplines, with multimillion-dollar prize pools. The game is completely free, and microtransactions affect only the cosmetics of the heroes, of which there are more than a hundred.

Two teams of five players clash in deadly combat on a single map. Every hero is unique in their own way, and the variety of item builds means you are not limited to a single role. All heroes are free, so spending does not affect balance, and victory depends solely on the players' skill. Sometimes you can carry a match on your own, but it is far more effective to build teamwork with your allies, because DotA 2 is, after all, a team game.

Promotions, tournaments, and events timed to major holidays are held regularly, so players never get bored. Every year Dota offers a Battle Pass tied to its biggest tournament, The International, with lots of quests and rewards. Even after 1,000 hours in the game there is always something new to discover, because the game keeps evolving and, one could say, lives a life of its own.

Counter-Strike: Global Offensive

Counter-Strike: Global Offensive from Valve is a legendary game whose earlier versions raised more than one generation of gamers. CS fans are hard to please: the previous attempt to modernize the game, Counter-Strike: Source, was a failure. The basic concept remains unchanged in CS:GO: players are still split into a team of terrorists and a team of special forces. The former plant bombs and hold hostages, while the latter try to defuse the bomb or rescue the civilians. And sometimes the teams simply fight until the last one standing.

The popularity of CS lies in its simplicity. There is no skill progression, no loot to collect, and the maps are small (but there are many of them). All players start on equal terms, and money for new weapons is earned by killing opponents, rescuing hostages, or planting the bomb.

GTA Online

Rockstar's GTA Online has remained one of the best-selling and most popular games for almost five years. The game surprises you from the very start: you won't find such an unusual character creator anywhere else. Rather than simply designing your character's appearance, you choose not only their parents but also their grandparents. Then you define their personality, habits, and pastimes (even their daily routine is covered).

Right after that, you are dropped into the thick of the action, with familiar mechanics, a familiar setting, and a variety of activities (you can play tennis or golf with a friend). On the whole, a city full of real people feels completely different from the offline mode populated by AI. Cosmetic customization (stylish outfits, car tuning, and more) finally has some purpose, too: you can show off how cool you are online.

But fun and entertainment cost money, and no one will let an honest player earn it, so you have to take on dirty work: selling stolen cars, robbing stores, and other petty crimes. The biggest payoff comes from pulling off heists with friends; here Rockstar did a great job and gave players many interesting modes that can keep you hooked for a long time. Once you have built up a decent fortune, you can open your own business.

The story campaign of GTA V, the game GTA Online is part of, is also well worth your time: it is carefully crafted, and while exploring the city you can find references to the previous installment. For the first time in the series, the story is played not with one character but with three. The game scored 96 out of 100 on Metacritic, something few games can boast.

World of Tanks

World of Tanks from Wargaming.net was an unconventional format for its time and founded the genre of arcade tank simulators. The game is positioned as an MMO action game that blends elements of strategy, shooter, and role-playing game. At its core are online tank battles in which players split into two teams of 15 and take part in one of three standard modes:

- assault

- capture

- random battle

Unlike the other games in this ranking, World of Tanks lets you get bonuses for free right at the start by using our invite link: a unique premium tank, a premium account, gold (the premium in-game currency), and much more. You can increase the size of the registration bonuses by entering an invite code of your choice from our list.

The game offers a unique opportunity to take control of legendary Soviet tanks such as the IS-3 or T-34, which many people had previously only seen on TV; the German Maus, the heaviest tank built during World War II; and the famous American Shermans. World of Tanks features more than 600 vehicles from 11 nations. The developers "breathed life" not only into vehicles that actually existed but also recreated many machines based solely on mock-ups, sketches, and even classified records. Who would have thought the game would even get a sub-branch of Soviet double-barreled tanks?

WoT features 5 vehicle classes, each of which plays its own specific role in battle:

- light tanks;

- medium tanks;

- heavy tanks;

- tank destroyers;

- artillery.

World of Warcraft

World of Warcraft from Blizzard Entertainment is a timeless classic of online role-playing games. It is one of the founders of the MMORPG genre and first came out back in 2004. Over 15 years it has collected many awards and even made it into the Guinness Book of Records as the most popular MMORPG. Although the genre is gradually moving to non-target combat and new projects are built on new engines with cutting-edge graphics, WoW still holds its ground, while most other tab-target games have faded into the past.

The game's continued popularity and large audience can be explained by the following reasons:

- the carefully crafted lore of the WoW universe, with superbly made cinematics, books, short stories, and comics. The film based on the Warcraft universe was the first video game adaptation to gross over $400 million;

- the game is constantly supported with new content, and a major expansion comes out every two years (7 had been released as of 2019);

- plenty of activities for fans of both PvE and PvP;

- the graphics keep improving.

So what awaits you in the game itself? Join the eternal conflict between the Horde and the Alliance! Start your own guild with friends or join an existing one. The many classes and races let you combine different options when creating a character. Then it's off to adventure, exploring Azeroth, a world of endless adventures, epic battles, and captivating stories. Clear dungeons, defeat deadly dragons, and find legendary artifacts! If you want some peace and quiet, you can go fishing on your own or gather resources. The game has a well-developed crafting and profession system, so peaceful pursuits also get plenty of attention.

Path of Exile

Surely many people remember, have played, or have at least heard of the Diablo series. Its third installment turned out to be controversial, and a few years after release the developers' support became merely symbolic. That is when many players turned their attention to Path of Exile from the modest studio Grinding Gear Games. It came out around the same time as Diablo III, but it is actively developed, regularly updated, and completely free to play.

PoE captivates with its dark atmosphere and many innovations, and by the end of 2019 it had received as many as 15 major expansions. In the story, the protagonist is an exile who must survive in the dark fantasy world of Wraeclast, driven by a desire for revenge against those who condemned them to this fate.

Path of Exile has several features that set it apart from other games in the genre:

- a wide choice of characters;

- a deep character progression system (hundreds of skill connections in a huge skill tree, which can be combined into unique builds);

- random quests become available as you progress through the story, so each playthrough differs from the previous ones (in addition, levels are procedurally generated every time you enter an area);

- powerful artifacts (the game is built around acquiring items that can significantly strengthen your hero);

- competitions (every three months a new league starts, with valuable prizes for the top players);

- the ability to build and furnish your own hideout with hundreds of decorations;

- interesting endgame content;

- a fair business model (no pay-to-win: microtransactions only buy cosmetic items that give no advantage over other players).

In November 2019 the developers announced Path of Exile 2, which will likely be not a separate game but a large expansion with seven new acts, playable alongside the original campaign.

Overwatch

A relatively new Blizzard Entertainment project, released in 2016, it stood out for having nothing to do with the company's three main franchises. Overwatch is an online first-person shooter in which players split into two teams of 6 and fight each other. At the start of a match, each player picks one of many heroes, which fall into three classes:

- damage

- tank

- support

The game has many different modes that keep things from getting boring and add plenty of variety. Besides regular round-based firefights, these include:

- point capture (one team defends positions while the other tries to capture them);

- escort (one team must ensure the payload is delivered safely, while the other must stop them);

- control;

- arenas;

- arcade (8 PvP game modes played in rounds).

Overwatch made a splash and revolutionized the shooter genre, earning many awards in 2016 and 2017, including Game of the Year, Best Multiplayer, and Best Esports Game.

Hearthstone

Another Blizzard Entertainment game (yes, they know how to make great stuff), but this time they picked a niche genre. In 2013, when they announced the online collectible card game Hearthstone, nobody understood why such a big company would bother. Yet within a year of its release, Hearthstone had become a cult classic in its genre, not only on PC but also on iOS and Android mobile devices.

The game turned five this year. Over that time there have been many attempts to build a similar game with the same monetization model, but none has reached the heights of Blizzard's project. The game remains number one in its genre and is an esports discipline, and the developers release three paid expansions every year (the game itself is free).

The game is set in the Warcraft universe, so players familiar with WoW will find it a bit easier to get to grips with the huge range of cards. At first, the game comes down to choosing a hero and building your own deck around it. There are many different approaches, so everyone can find a style that suits them: overwhelm the opponent with cards right away, hold out and save your trump cards for the end, steal your opponent's best cards, or wait until they put their strongest cards on the board and then destroy them with powerful magic.

Over the past few years, the game has become more welcoming to newcomers:

- seasonal rewards based on your ranked placement have been added;

- a new mode, Tavern Brawl;

- Arena rewards have been made more generous;

- the option to play with random current cards.
